I watched the first lecture, Deep Learning Basics, from the MIT course 6.S094: Deep Learning for Self-Driving Cars. These are my highlights.

Slides for this lecture: https://bit.ly/deep-learning-basics-slides

Website: https://deeplearning.mit.edu/

GitHub repo with tutorials: https://github.com/lexfridman/mit-deep-learning

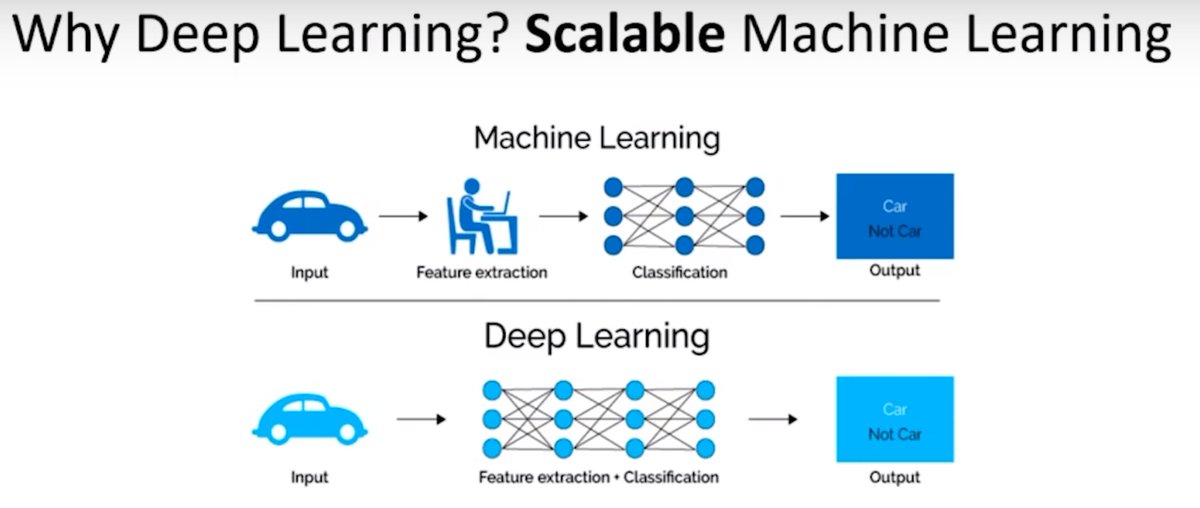

Why deep learning? It learns useful features directly from the data, which reduces the need for human experts to hand-engineer them.

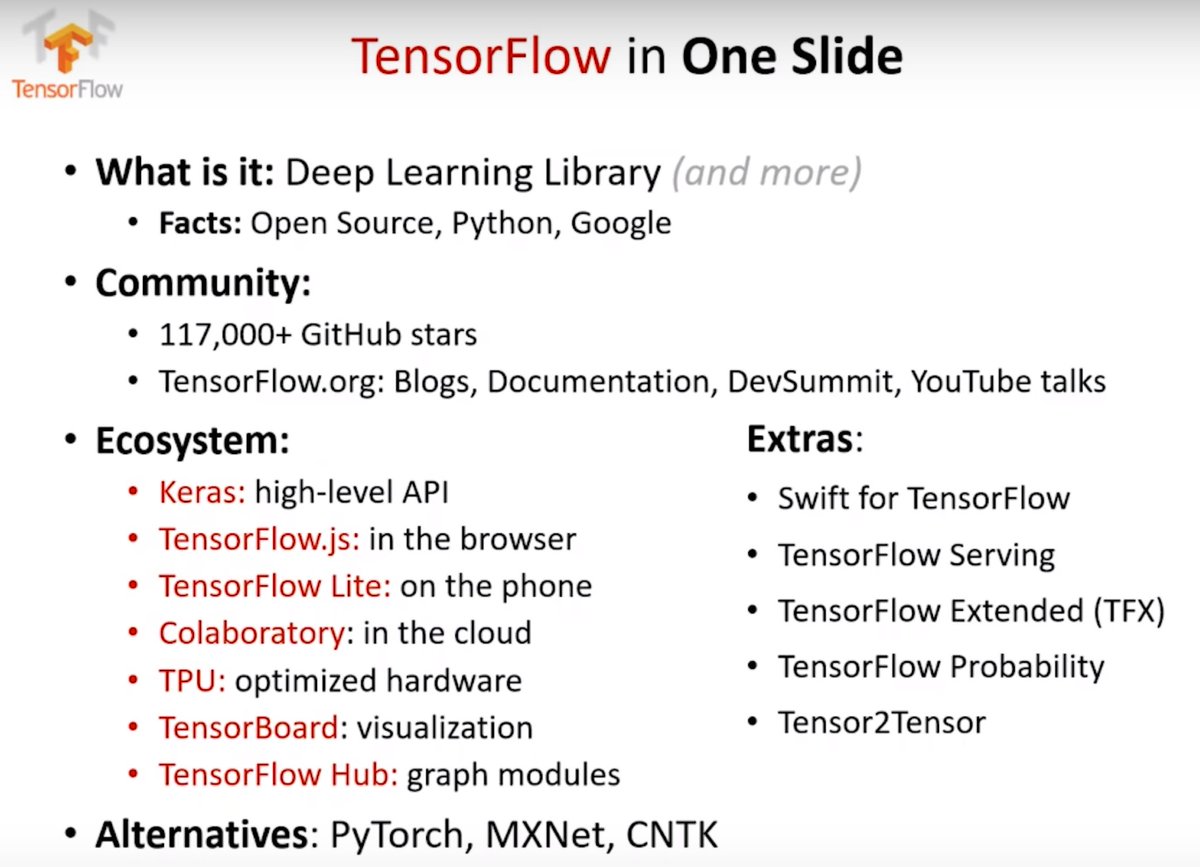

Great overview of the TensorFlow ecosystem.

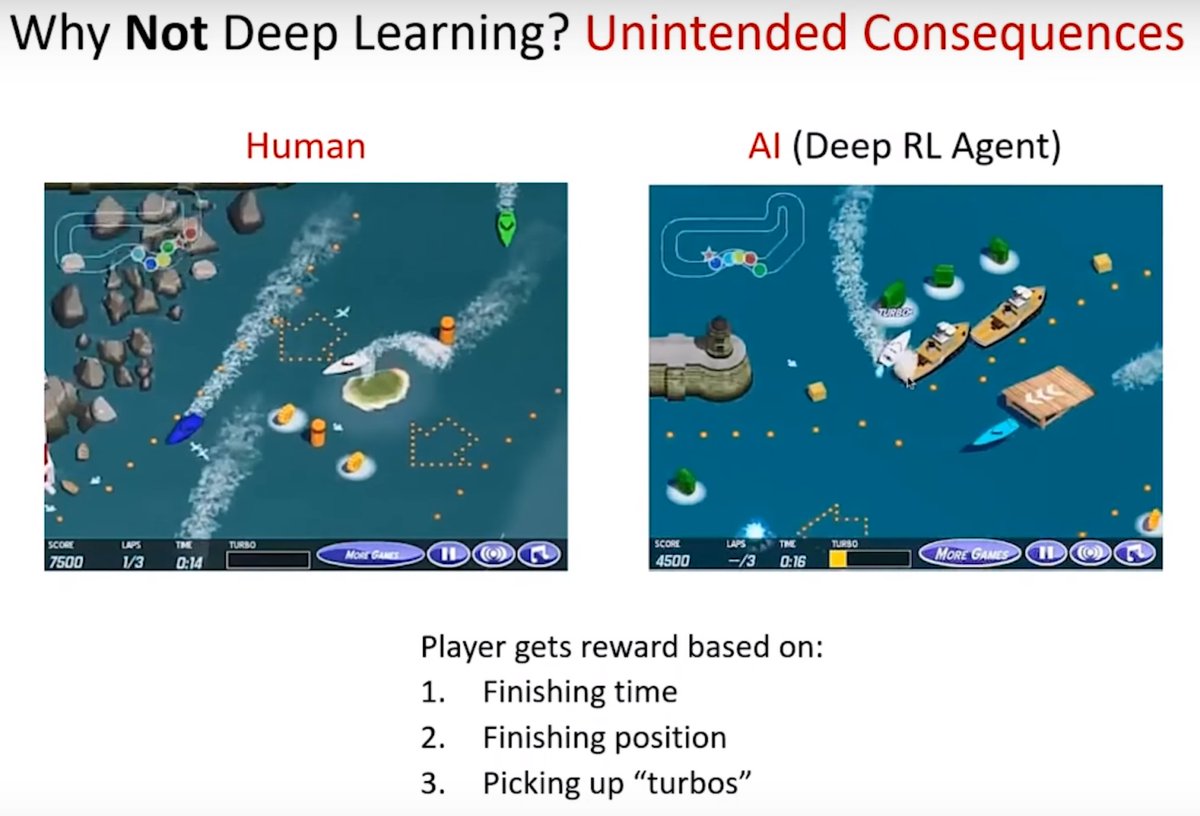

The deep RL agent discovers that the optimal strategy has nothing to do with finishing the boat race or with its ranking: it can score many more points by driving in circles and collecting the turbos, because they regenerate.
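To make the boat race example concrete, here is a toy back-of-the-envelope comparison in Python. Every number and name in it is made up for illustration (the lecture does not give the game's actual scoring), so treat it as a sketch of why a reward-maximizing agent might prefer circling over finishing.

```python
# Toy illustration of reward hacking. All values are hypothetical,
# not the real scoring of the boat racing game shown in the lecture.
FINISH_BONUS = 100       # one-time reward for crossing the finish line
TURBO_POINTS = 10        # reward for each turbo pickup
TURBOS_ON_ROUTE = 5      # turbos picked up on the way to the finish
EPISODE_STEPS = 1000     # length of one episode in time steps
STEPS_PER_LOOP = 20      # steps needed for one circle through the turbos
TURBOS_PER_LOOP = 3      # turbos that have regenerated on each circle


def finishing_return() -> int:
    """Total reward for a policy that simply races to the finish line."""
    return FINISH_BONUS + TURBOS_ON_ROUTE * TURBO_POINTS


def circling_return() -> int:
    """Total reward for a policy that circles and farms regenerating turbos."""
    loops = EPISODE_STEPS // STEPS_PER_LOOP
    return loops * TURBOS_PER_LOOP * TURBO_POINTS


print("finish the race:", finishing_return())
print("drive in circles:", circling_return())
```

Under these assumed numbers, circling wins by a wide margin, which is exactly the kind of gap between the reward we wrote down and the behavior we actually wanted.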

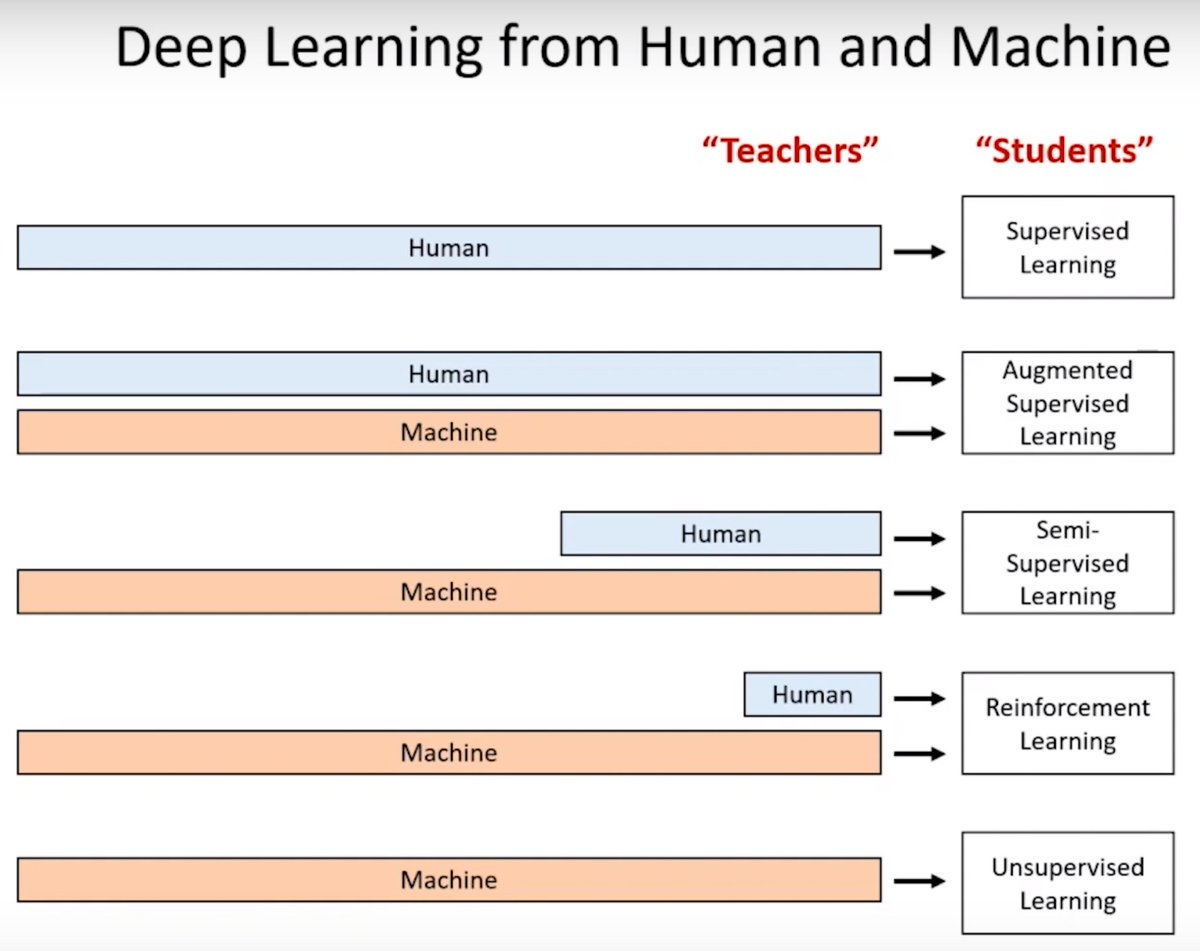

The challenge before us is to solve huge real-world problems with less and less human supervision.

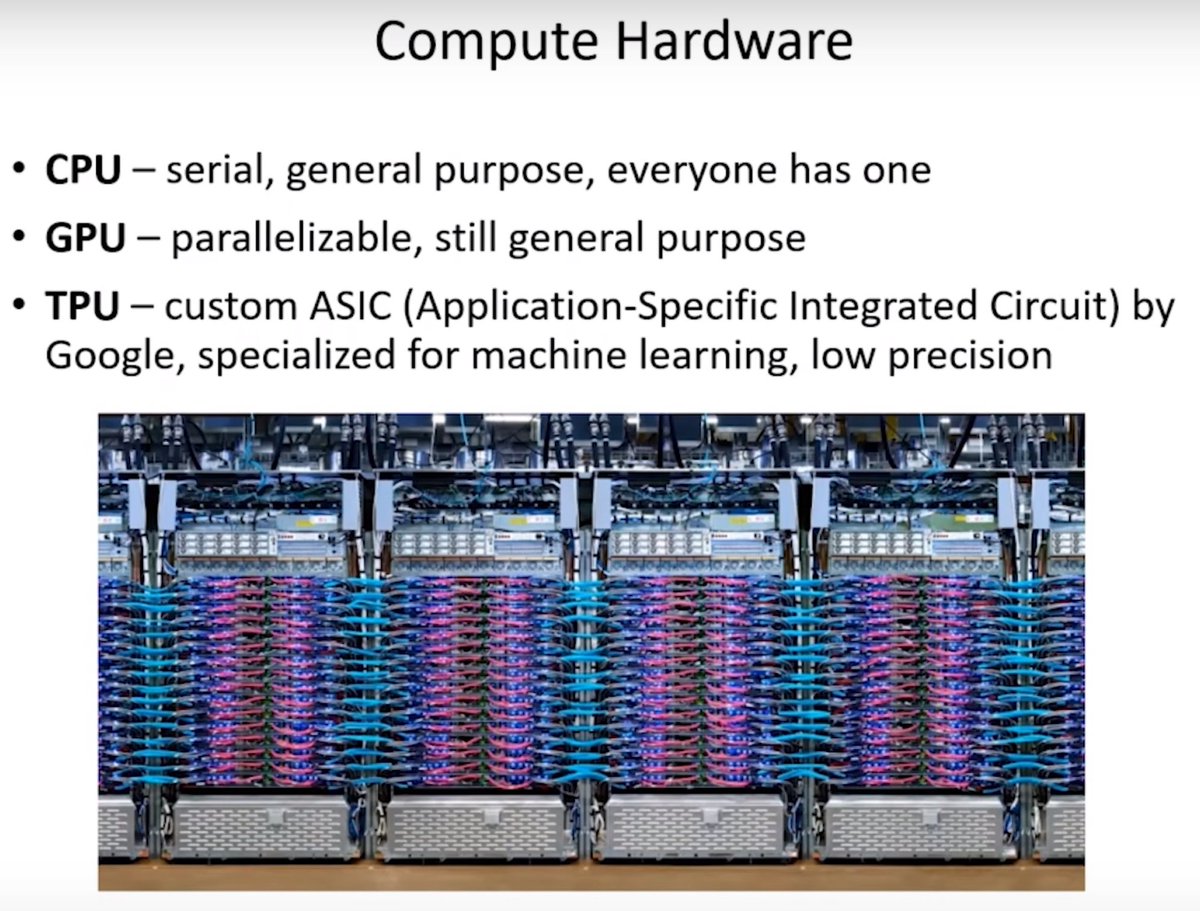

The parallelizability of neural networks is what enables some of the exciting advancements on the GPU and with ASIC TPUs.
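A small sketch of where that parallelism comes from: within one layer, each neuron's output depends only on the shared inputs and its own weights, so the neurons can be computed independently. The function names and the tiny layer below are my own illustration, and the thread pool only demonstrates the independence; it is not meant to show a real speedup, which is what GPUs and TPUs provide in hardware.

```python
from concurrent.futures import ThreadPoolExecutor


def neuron_output(weights, bias, inputs):
    """ReLU of the weighted sum for a single neuron."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return max(0.0, z)


def layer_sequential(weight_rows, biases, inputs):
    """Compute the neurons of one layer one after another."""
    return [neuron_output(w, b, inputs) for w, b in zip(weight_rows, biases)]


def layer_parallel(weight_rows, biases, inputs):
    """Compute the same neurons as independent tasks in a thread pool."""
    with ThreadPoolExecutor() as pool:
        shared_inputs = [inputs] * len(biases)
        return list(pool.map(neuron_output, weight_rows, biases, shared_inputs))


# Hypothetical layer with 2 inputs and 3 neurons.
weight_rows = [[0.5, -1.0], [1.0, 1.0], [-2.0, 0.5]]
biases = [0.0, 0.1, 0.2]
inputs = [1.0, 2.0]

sequential = layer_sequential(weight_rows, biases, inputs)
parallel = layer_parallel(weight_rows, biases, inputs)
print(sequential == parallel)
```

Because no neuron needs another neuron's result from the same layer, the order of evaluation does not matter and both versions give the same outputs.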

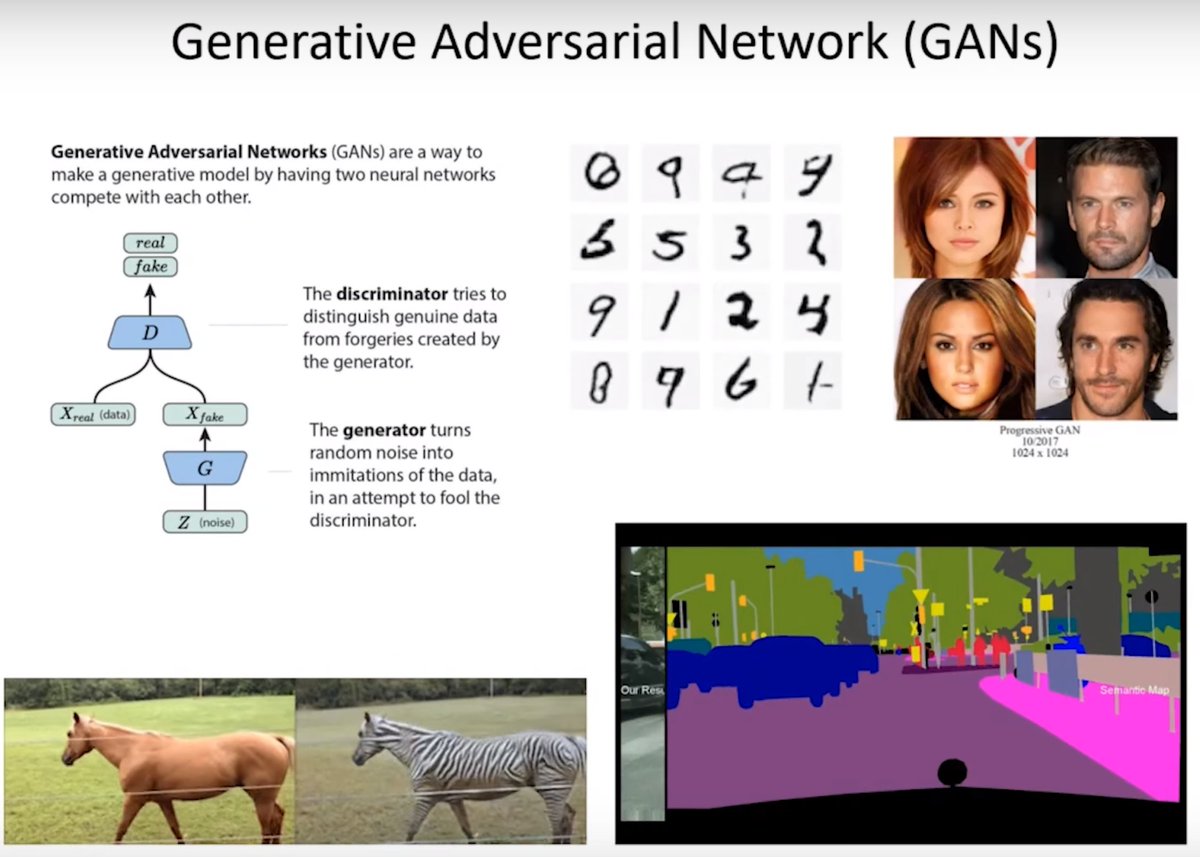

Two networks compete against each other. The generator tries to produce images realistic enough to trick the discriminator, which has to distinguish real images from generated ones. Both get better together.
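The competition can be written down as two loss functions. The sketch below uses the standard GAN losses with the common non-saturating generator loss; that choice is my assumption, since these notes do not record which formulation the lecture used. Here `d_real` and `d_fake` stand for the discriminator's estimated probability that a real or a generated image is real.

```python
import math


def discriminator_loss(d_real: float, d_fake: float) -> float:
    """Low when real images score near 1 and generated images score near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake: float) -> float:
    """Low when the discriminator scores generated images near 1."""
    return -math.log(d_fake)


# Hypothetical discriminator outputs for two situations.
fooled = {"d_real": 0.9, "d_fake": 0.9}      # generator is winning
not_fooled = {"d_real": 0.9, "d_fake": 0.1}  # discriminator is winning

for name, scores in (("fooled", fooled), ("not fooled", not_fooled)):
    print(name,
          "D loss:", round(discriminator_loss(**scores), 3),
          "G loss:", round(generator_loss(scores["d_fake"]), 3))
```

The two losses pull in opposite directions on `d_fake`: whatever lowers the generator's loss raises the discriminator's, which is the tension that drives both networks to improve.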

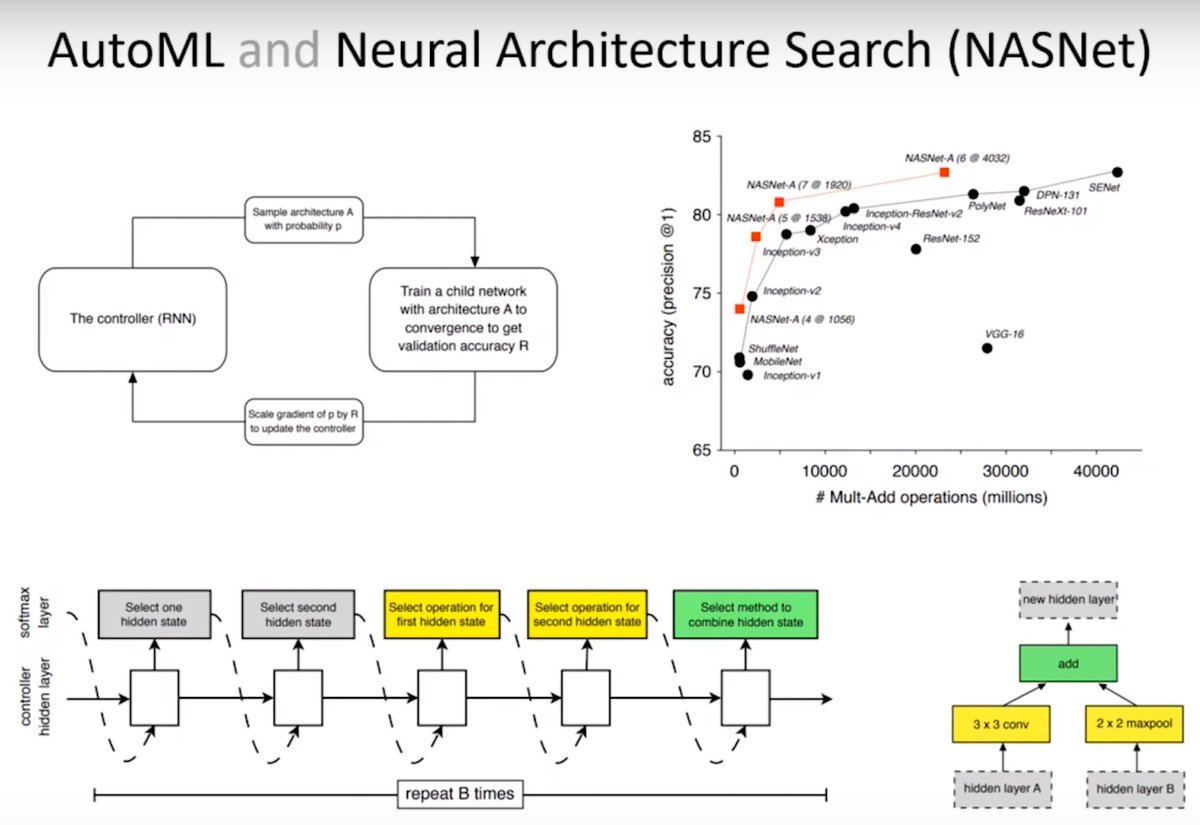

Instead of putting the LEGO pieces together yourself, you bring the dataset and AutoML tells you which kind of neural network is likely to do best on it. This lets you focus on asking the right question and finding the right data to answer it.

Deep reinforcement learning: the agent learns how to behave in the world by observing the state and the reward it receives after taking an action.
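The observe-act-reward loop can be sketched with tabular Q-learning on a tiny made-up environment. This is a simplification on my part: a deep RL agent would replace the table with a neural network, and the five-state corridor, the reward of 1 at the goal, and the hyperparameters are all assumptions chosen to keep the example short.

```python
import random

N_STATES = 5          # states 0..4 on a line; state 4 is the goal (toy setup)
ACTIONS = (0, 1)      # 0 = move left, 1 = move right
ALPHA, GAMMA = 0.5, 0.9  # learning rate and discount factor (assumed values)


def step(state, action):
    """Toy environment: return (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, max_steps=100, seed=0):
    """Learn a Q-table from observed (state, action, reward, next_state) steps."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            # Explore uniformly at random; Q-learning is off-policy,
            # so it can still learn the values of the greedy policy.
            action = rng.choice(ACTIONS)
            next_state, reward, done = step(state, action)
            target = reward if done else reward + GAMMA * max(q[next_state])
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


q_table = train()
# Greedy action in each non-goal state after training.
greedy_policy = [max(ACTIONS, key=lambda a: q_table[s][a])
                 for s in range(N_STATES - 1)]
print(greedy_policy)
```

After training, the greedy policy picks "move right" in every non-goal state, so the agent has learned to head for the reward purely from the states and rewards it observed.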

DeepTraffic is a deep RL competition, open to all, whose goal is to create a neural network that drives one or more vehicles as fast as possible through dense traffic.

Website: selfdrivingcars.mit.edu/deeptraffic/

Paper (arXiv): arxiv.org/abs/1801.02805

GitHub: https://github.com/lexfridman/deeptraffic